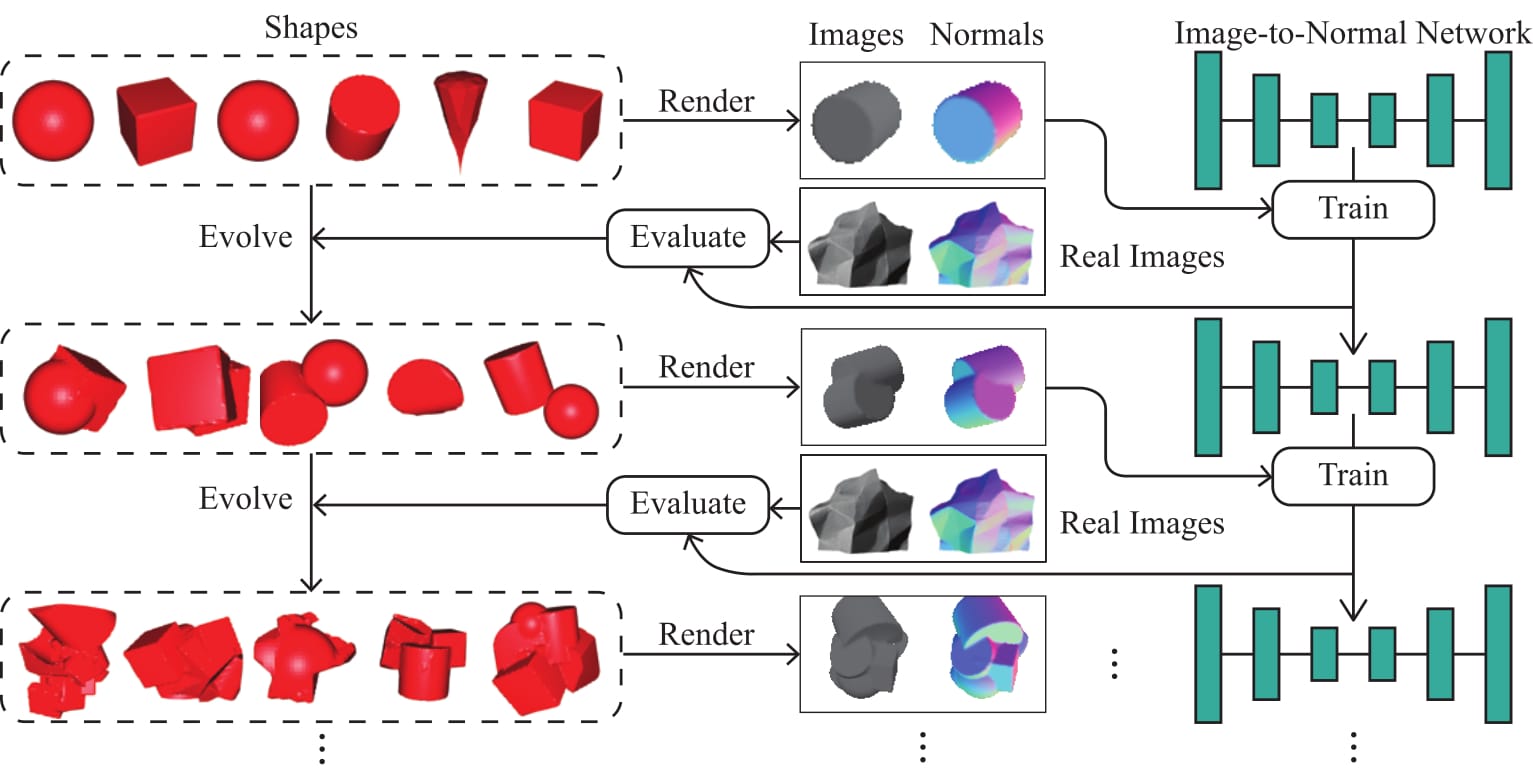

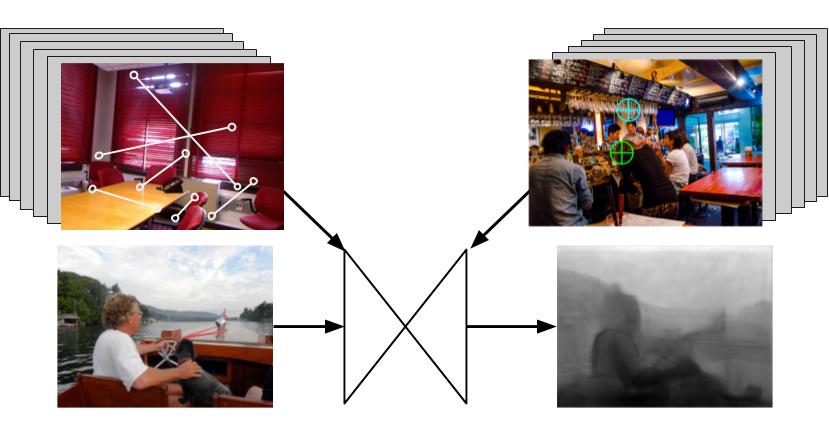

I am a PhD candidate at the University of Michigan, advised by Prof. Jia Deng and co-advised by Prof. David Fouhey. As a member of the Princeton Vision and Learning Lab, I do research in computer vision and machine learning. My research focuses on using computer graphics techniques to improve inverse rendering tasks such as 3D reconstruction, and on adversarial attacks in 3D domains.