About

I'm a senior studying Computer Engineering at the University of Michigan. I'm interested in digital signal processing and its applications in music and audio, as well as in healthcare technology and the impact of engineering progress on patient care and on life outside of the doctor's office.

I'm a pianist and vocalist who recently picked up the guitar as well, and I love to write and sing music of all kinds! I'm also the music director of Maize Mirchi A Cappella, and I write and arrange a lot of their music.

Professional Experience

Test Engineer, Flat Panel Display Link (FPD-Link)

I worked on the FPD-Link team in Texas Instruments' Santa Clara office as a Test Engineering Intern. FPD-Link is a product line of serializers and deserializers for transmitting high-speed data. I built software tools and scripts that reduced time spent on the test floor, lowering costs. I also prepared a cost report on using a Xilinx FPGA board and external hardware to implement the MIPI Alliance D-PHY communication protocol, commonly used in displays and cameras.

Research Assistant

I had a wonderful opportunity to work with the Flat Panel Imager Group at the University of Michigan. Their work involves building a new poly-silicon X-ray imager that reduces the noise in X-ray images, producing better image quality at a much lower radiation dose to the patient. Higher X-ray frame rates will also open up a wealth of new possibilities in X-ray technology that can help advance modern medicine.

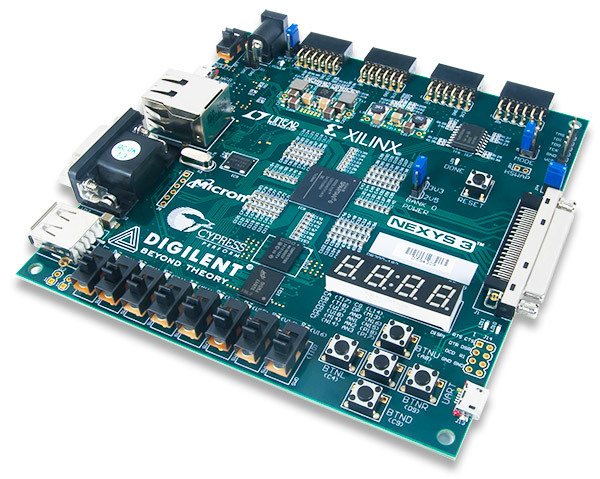

My work involved writing SystemVerilog to program FPGA boards that interface with poly-silicon flat panel X-ray arrays, and using UART and SPI communications to help the research team debug X-ray communications. I also soldered components onto the lab's custom boards and tested their functionality using multimeters and oscilloscopes. Currently, I'm working on an Arty embedded system to improve data transfer rates from the pixels in the array.

Software Engineering Intern

HealthPals is a healthcare technology company founded by Dr. Rajesh Dash and Sushant Shankar. It combines medical science and data science to give doctors guideline-driven treatments for their patients and reduce medical errors, with a primary focus on cardiovascular health.

During my internship, I served as a liaison between the frontend and backend engineering teams in the development of the CLINT product (developed in Python and React.js), designed to give value-based decisions to clinicians at the point of care. I analyzed several thousand anonymous patient records and provided insights on patients' risk for cardiovascular disease by writing a Population Dashboard using JavaScript, Python/pandas, and seaborn.

Projects

Student, Algorithms Engineer

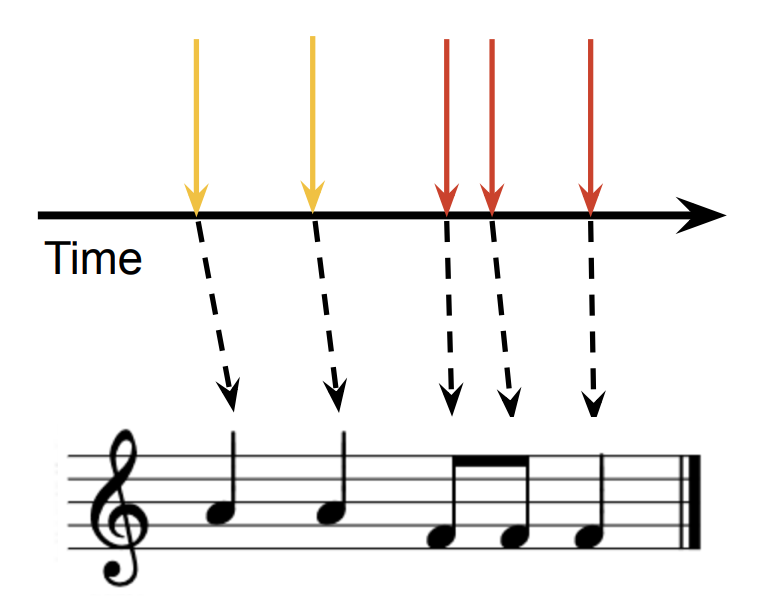

Through EECS 452 (Digital Signal Processing), I worked on a project that processes drum kit audio inputs and classifies them in real time, creating sheet music as it goes. The sheet music includes properly quantized inputs based on probabilistic priors for common beats (putting an emphasis on eighth and quarter notes over 16th notes, etc.).
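As a rough illustration of the quantization idea (not the project's actual code), the sketch below snaps an onset time, measured in beats, to a quarter-, eighth-, or sixteenth-note grid. The weights in `GRID_PRIORS`, the Gaussian timing model, and the `sigma` value are all assumptions made up for this example; they are not the priors the project used.

```python
import math

# Hypothetical prior weights per grid step (in beats): quarter, eighth, sixteenth.
# Coarser subdivisions get more weight, mirroring the emphasis described above.
GRID_PRIORS = {1.0: 0.5, 0.5: 0.35, 0.25: 0.15}


def quantize_onset(onset_beats, sigma=0.08):
    """Snap an onset to the grid position with the highest prior * likelihood.

    The likelihood is an (assumed) Gaussian on the timing error, so a slightly
    late eighth note can beat a closer sixteenth-note position.
    """
    best_position, best_score = None, -1.0
    for step, prior in GRID_PRIORS.items():
        # Nearest grid line for this subdivision.
        candidate = round(onset_beats / step) * step
        error = onset_beats - candidate
        score = prior * math.exp(-(error ** 2) / (2 * sigma ** 2))
        if score > best_score:
            best_position, best_score = candidate, score
    return best_position


if __name__ == "__main__":
    for onset in (1.03, 1.27, 1.36):
        print(onset, "->", quantize_onset(onset))
```

With these assumed weights, an onset at 1.36 beats lands on the eighth-note position 1.5 even though the sixteenth-note position 1.25 is slightly closer, which is the kind of bias a beat prior is meant to introduce.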

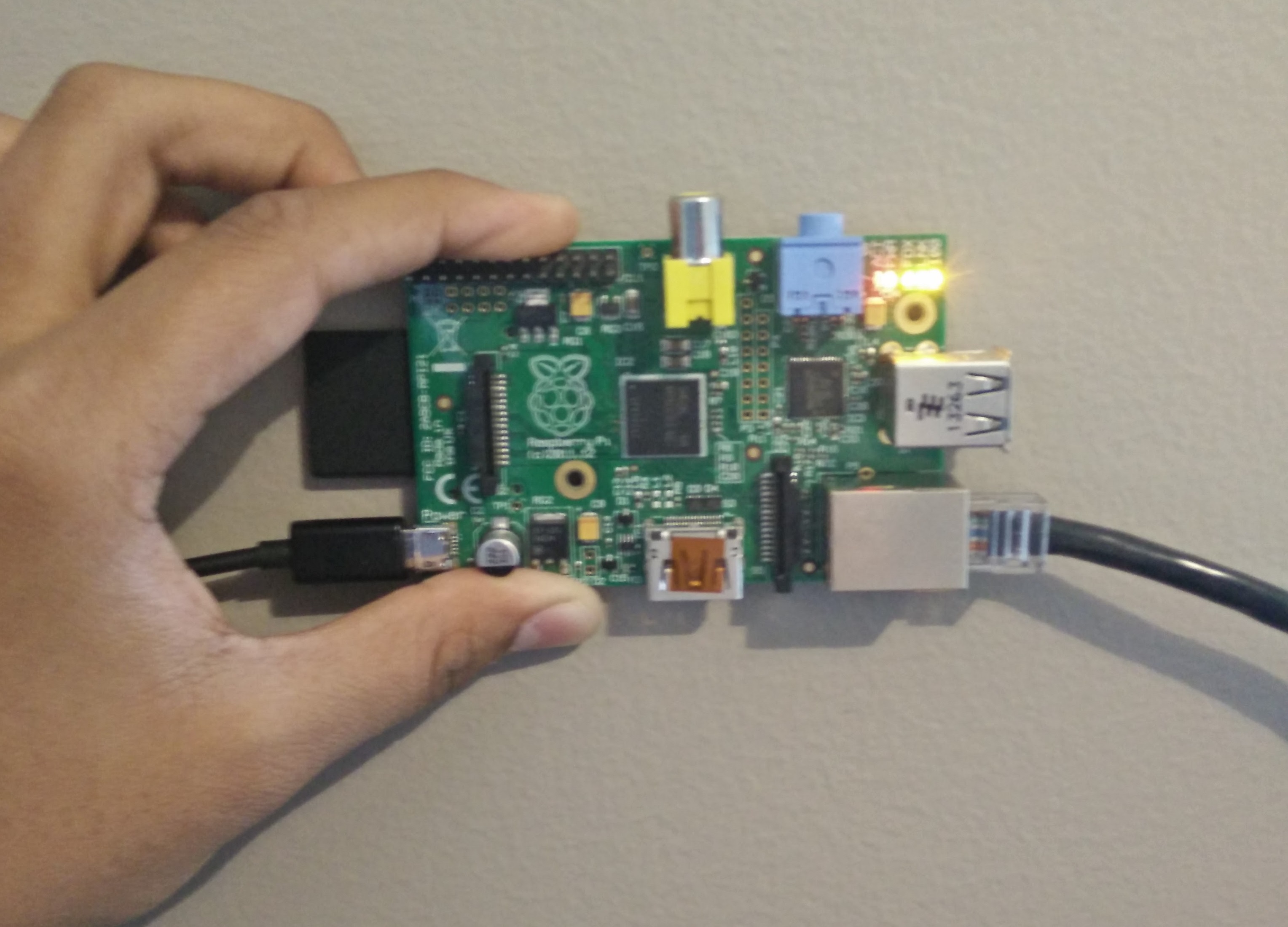

The project uses an STM Nucleo DSP chip to process and classify the input drum hits using Non-Negative Matrix Factorization (NNMF). Once the Nucleo has classified each drum hit by type (snare, closed hi-hat, tom, crash, etc.), the system sends the onsets via UART to a Raspberry Pi. The Raspberry Pi then "transcribes" the input using known beat priors based on this paper. After determining the correct output, the system generates sheet music using the Guido Music Notation Framework. The project was demoed at the Design Expo on December 5th. You can see our poster and report.
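To give a feel for how NMF can label a drum hit, here is a minimal sketch that assumes a fixed dictionary of per-drum spectral templates and estimates non-negative gains for one spectrum frame using the standard multiplicative update for the Euclidean cost. The four-bin spectra in `TEMPLATES`, the function names, and the "largest gain wins" rule are illustrative assumptions, not the code that ran on the Nucleo.

```python
# Hypothetical toy magnitude spectra (4 frequency bins, low to high).
TEMPLATES = {
    "kick": [1.0, 0.2, 0.0, 0.0],
    "snare": [0.1, 1.0, 0.6, 0.1],
    "hi-hat": [0.0, 0.1, 0.5, 1.0],
}


def nmf_activations(templates, frame, iterations=200, eps=1e-12):
    """Estimate non-negative gains h so that sum_k h[k] * templates[k] ~ frame."""
    bins = range(len(frame))
    h = [1.0] * len(templates)
    for _ in range(iterations):
        # Reconstruction of the frame from the current gains.
        approx = [sum(h[k] * t[i] for k, t in enumerate(templates)) for i in bins]
        # Multiplicative update: h <- h * (W^T v) / (W^T W h), all gains at once.
        for k, t in enumerate(templates):
            numerator = sum(t[i] * frame[i] for i in bins)
            denominator = sum(t[i] * approx[i] for i in bins)
            h[k] *= numerator / (denominator + eps)
    return h


def classify_hit(frame):
    """Label a frame with the drum whose template has the largest gain."""
    names = list(TEMPLATES)
    gains = nmf_activations([TEMPLATES[name] for name in names], frame)
    return names[gains.index(max(gains))]


if __name__ == "__main__":
    print(classify_hit([0.08, 0.8, 0.48, 0.08]))
```

Because the updates only multiply by non-negative ratios, the gains stay non-negative without any explicit clipping, which is part of what makes this style of NMF attractive on small embedded targets.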

Student, Algorithms Engineer

Through EECS 498/598, I worked on a project that accelerated AES encryption and also attempted to accelerate homomorphic encryption, a scheme that allows computation directly on encrypted data. The abstract of the report is below, and you can find the full report here.

In this paper we first discuss a failed attempt to create a homomorphic encryption matrix-multiply accelerator, followed by a successful attempt to build an AES accelerator. Homomorphic encryption is a type of encryption scheme based on the ring learning with errors (RLWE) assumption that allows for bit-wise operations in the encrypted domain. This opens interesting opportunities in cloud computing on sensitive data, such as AI on health data. Due to a lack of understanding of abstract algebra, we pivoted away from the homomorphic encryption accelerator to the aforementioned AES accelerator. The Advanced Encryption Standard (AES) has been used widely throughout industry, research, and government [10]. Because of its wide use, there is always a need to accelerate it. In this paper, we implement and test an FPGA accelerator that speeds up AES-128 encryption. We compare our results with a software implementation of the AES algorithm, and with Intel® Advanced Encryption Standard (AES) New Instructions (AES-NI) as well, which is the current industry standard for AES acceleration.

Student, Algorithms Engineer

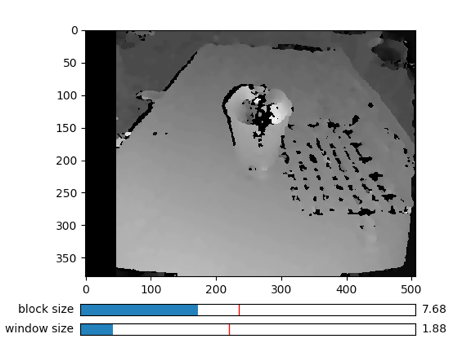

Through EECS 442 (Computer Vision), I worked with a partner on a self-guided final project. I decided to work on structure from motion, a process that creates 3D visualizations from 2D images. An abstract of the document is below.

We pose two methods to construct a 3D point cloud of an object given a set of images of said object at various angles around it. This concept is called structure from motion, and we would like to implement a basic version of it for small objects in a confined space. We will do this by generating a 3D cloud from the relationship between the camera’s intrinsic parameters and the world’s extrinsic parameters. The first method involves creating disparity maps between stereo images to make dense clouds and the second method involves using epipolar lines and feature extraction to create sparse, more accurate 3D clouds. Our full document is shown here.
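For the disparity-map method, the usual pinhole stereo relation turns each pixel's disparity into a 3D point: depth is focal length times baseline divided by disparity. The sketch below shows that back-projection under the assumption of a rectified stereo pair; the function name and all parameter values are hypothetical and are not taken from the report.

```python
def disparity_to_point(u, v, disparity, focal_px, baseline_m, cx, cy):
    """Back-project pixel (u, v) with a given disparity into camera coordinates.

    Assumes a rectified stereo pair: focal_px is the focal length in pixels,
    baseline_m the distance between the cameras in meters, and (cx, cy) the
    principal point in pixels.
    """
    if disparity <= 0:
        raise ValueError("disparity must be positive")
    z = focal_px * baseline_m / disparity  # depth from Z = f * B / d
    x = (u - cx) * z / focal_px
    y = (v - cy) * z / focal_px
    return (x, y, z)


if __name__ == "__main__":
    # Hypothetical camera: 700 px focal length, 10 cm baseline, 640x480 image.
    print(disparity_to_point(390, 240, 35, 700, 0.1, 320, 240))
```

Applying this to every pixel of a disparity map yields the dense cloud; small disparities map to large depths, so disparity noise matters most for far-away points.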

Student, Algorithms Engineer

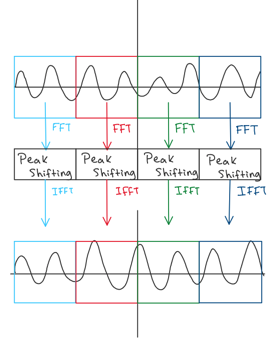

In the EECS 351 Digital Signal Processing class, our final term project gave us a chance to apply the signal processing techniques learned in class to a project we designed and tested ourselves. For this project, I tried to extend previous work on the Max/MSP Vocal Harmonizer from the Michigan Project Music makeathon. Rather than using Max/MSP, we used MATLAB and developed our own pitch-shifting algorithm, building on papers such as Jean Laroche's "New Phase-Vocoder Techniques for Pitch-Shifting, Harmonizing and Other Exotic Effects". With it, we attempted to create a real-time solution that takes input melodies and MIDI notes and creates harmonies! We outline our initial work on our website here, and more work is underway to port the project to a Virtual Studio Technology (VST) plugin so that anyone can use it in a professional audio workstation just by dragging and dropping a file.

Student, Hardware Engineer

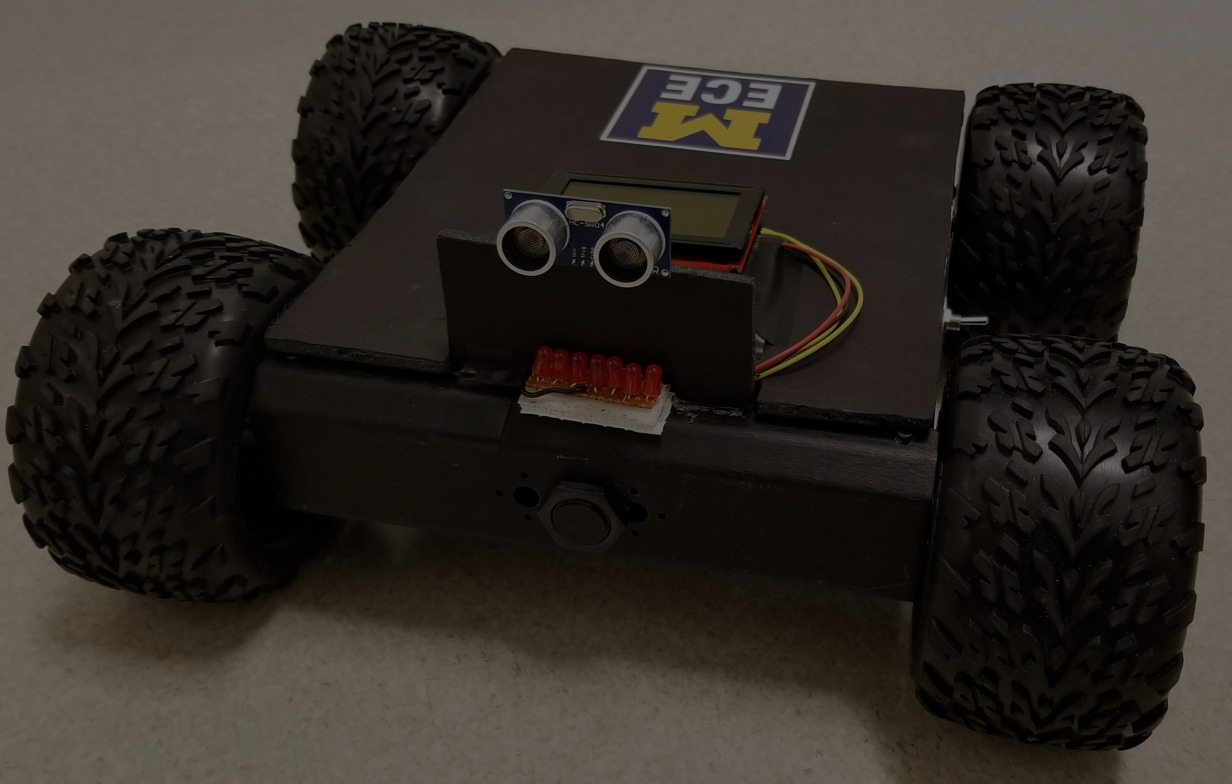

Buddy bot is a following robot designed to help carry objects around the house for you. It was designed in the EECS 373 Embedded Systems class, where I got a chance to work on an embedded systems project with a team. While it was just a prototype built in 3 weeks, we wanted to design something that could actually be useful to an elderly person who needs more assistance at home, so that they don't have to carry potentially heavy objects. Buddy bot follows an IR-emitting anklet strapped around one's ankle. We used the SmartFusion SoC with an onboard FPGA and an ARM Cortex-M3 processor. Our team designed, built, and tested the device, writing hardware components in Verilog and interfacing with the hardware in C. For more information, check out our webpage.

Algorithms Subteam

VIDEO from Design Expo

The goal of Maestro 2018 is to build a virtual conducting system for the University of Michigan’s School of Music, Theatre, and Dance to assist new conductors in practicing their art. Traditionally, new conductors attend active classes where an instructor watches them conduct and provides feedback. To practice outside the classroom, they either practice silently in front of a mirror or must recruit the help of musicians. With Maestro, conductors practice with a computer-simulated ensemble, thereby eliminating the need for live musicians as well as the stress that comes with performing in front of them. New conducting students would use this system in tandem with a traditional classroom experience.

Most of my work involved collecting data on various music conductors' gestures at different dynamic levels (loudness), articulations (style of sound), and tempos (beats per minute). Using this data from almost 14 conductors, I helped design signal processing algorithms to interpret those gestures using an Inertial Measurement Unit that measures rotation, acceleration, and gyroscope data. I used MATLAB and Python/pandas to write data analysis code and apply transforms to the sampled data. Later on, I worked on integrating the system into the Apple ecosystem, converting the algorithms to run in real time in Swift using an Apple iPhone's IMU and a Mac computer for sound synthesis.

The project was conducted through the university's Multidisciplinary Design Program, which gives students a chance to work on teams of people from various backgrounds, give technical presentations, write papers, and work with a sponsor (in our case Dr. Andrea Brown) and their varying needs.

Space Knobs Vocal Harmonizer

I got a chance to participate in Michigan Project Music's inaugural music makeathon, where groups built an experimental "instrument" in 18 hours. It was a really cool experience where I learned a lot about Max/MSP and some cool signal processing concepts and applied them to music.

I worked on a live vocal harmonization tool controlled by knobs (whose positions were read from potentiometers connected to an Arduino). Each knob is mapped to a "voice" and quantized to a specified scale (major, minor, blues, etc.). As you sing into the microphone, "replicas" of your voice are generated using an algorithm that mimics tape deck shifting. The program was written in Max/MSP and accepted input through either the knobs or an optional MIDI controller that you could plug in to input notes, similar to Jacob Collier and Snarky Puppy in "Don't You Know". You can see a video of the project here.
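The knob-to-scale quantization can be pictured with a small sketch like the one below, which snaps a continuous pitch value (expressed as a MIDI note number) to the nearest note of a chosen scale. The original patch was built in Max/MSP, so this Python version, the `SCALES` table, and the tie-breaking rule (prefer the lower note) are illustrative assumptions only.

```python
# Scale degrees as semitone offsets from the root.
SCALES = {
    "major": [0, 2, 4, 5, 7, 9, 11],
    "minor": [0, 2, 3, 5, 7, 8, 10],
    "blues": [0, 3, 5, 6, 7, 10],
}


def quantize_to_scale(pitch, root=60, scale="major"):
    """Return the scale note (MIDI number) closest to a continuous pitch value.

    Ties are resolved toward the lower note (an arbitrary choice for this sketch).
    """
    candidates = [
        root + 12 * octave + degree
        for octave in range(-6, 7)
        for degree in SCALES[scale]
    ]
    return min(candidates, key=lambda note: (abs(note - pitch), note))


if __name__ == "__main__":
    # A knob position mapped to pitch 64.4 snaps to E (64) in C major.
    print(quantize_to_scale(64.4, root=60, scale="major"))
```

Each voice would run its knob value through a function like this before pitch shifting, so that every generated harmony stays inside the selected scale.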

Pilot Student

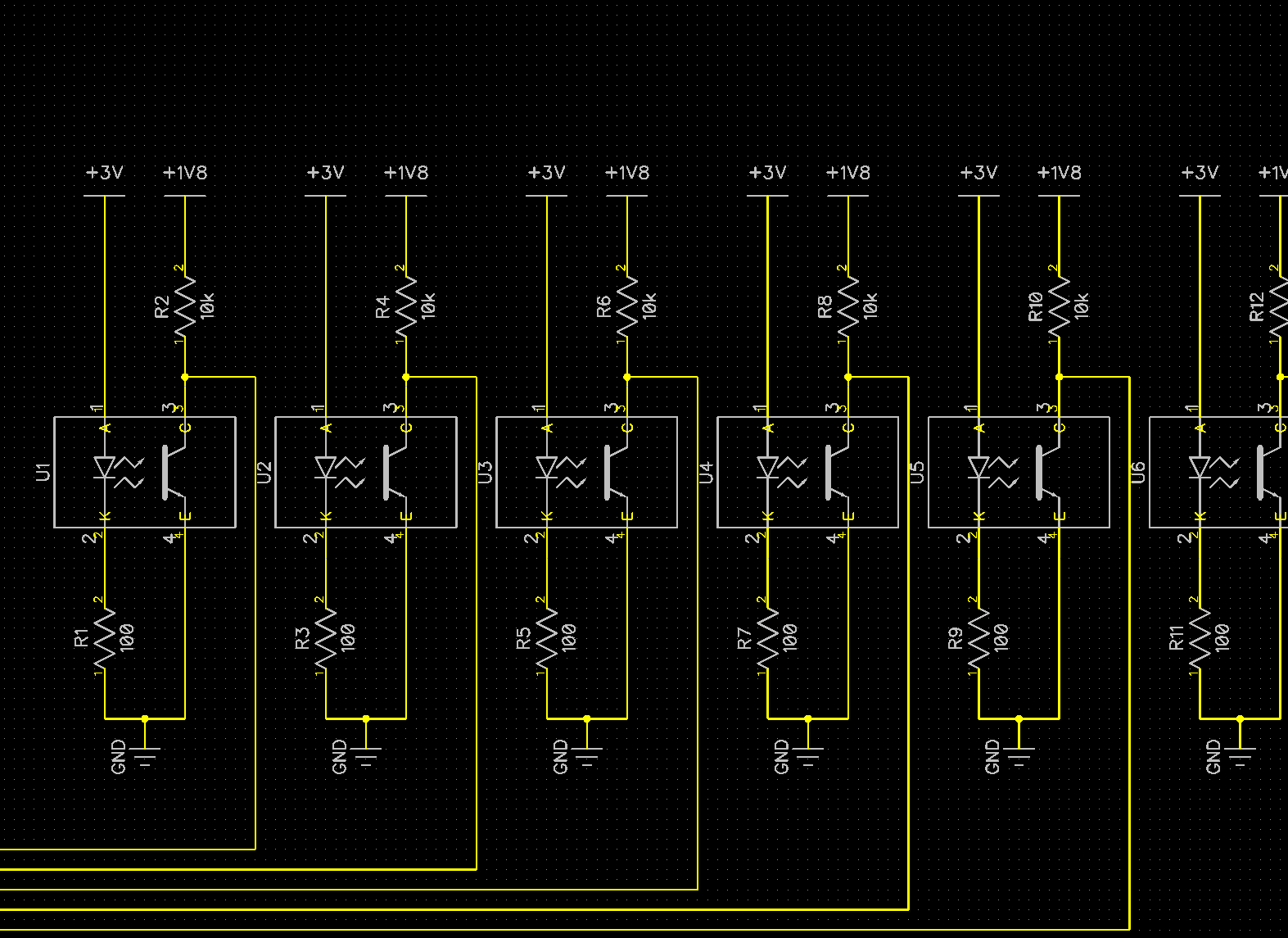

I took part as a pilot student testing a new program for graduate students. As a part of the course, I designed, built, and tested an autonomous line-following robot using a BeagleBone, IMUs, and a custom line-sensing PCB module.

Although I didn't get a chance to fully complete the project with my group in the timeline we had, I got a chance to fully design and test a PCB module, laying it out in DipTrace and sending the designs to OSH Park. I also got a chance to learn about 3D modeling using SolidWorks and how to use a laser cutter and 3D printer to build the components necessary for the robot.

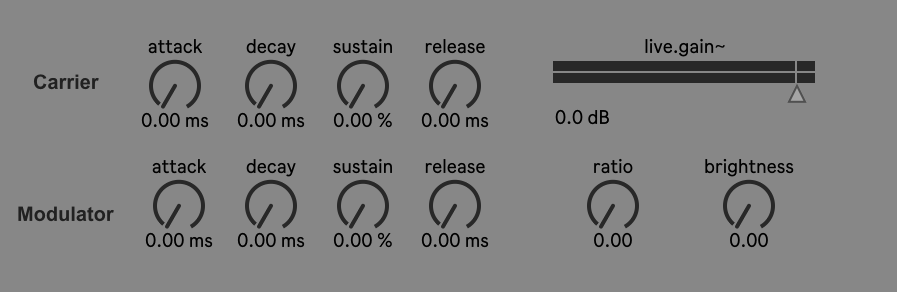

Polyphonic Frequency Modulation Synthesizer

I wrote a frequency modulation (FM) synth in Max/MSP. It modulates a sine wave carrier that has an ADSR (attack, decay, sustain, release) envelope with a modulator that has its own ADSR, along with "brightness" and "ratio" knobs. I used it for a live performance in my PAT 201 (Performing Arts Technology) class, feeding the output of the synth into a vocoder as I sang live into the microphone. I've linked the maxpat here.
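For readers unfamiliar with FM synthesis, here is a minimal single-voice sketch of the signal flow: a sine carrier whose phase is modulated by a second sine, with a separate ADSR envelope on each. The original was a Max/MSP patch, so this Python version is only an approximation; in particular, treating "brightness" as the peak modulation index and "ratio" as the modulator-to-carrier frequency ratio is an assumption, as are all envelope times.

```python
import math


def adsr(t, note_len, attack=0.01, decay=0.1, sustain=0.7, release=0.2):
    """Piecewise-linear ADSR value at time t (seconds).

    Assumes the note is held for note_len >= attack + decay seconds.
    """
    if t < 0:
        return 0.0
    if t < attack:
        return t / attack
    if t < attack + decay:
        return 1.0 - (1.0 - sustain) * (t - attack) / decay
    if t < note_len:
        return sustain
    if t < note_len + release:
        return sustain * (1.0 - (t - note_len) / release)
    return 0.0


def fm_sample(t, freq, note_len, ratio=2.0, brightness=3.0):
    """One output sample of a single FM voice at time t."""
    # The modulator envelope scales the modulation index over time (assumed mapping).
    index = brightness * adsr(t, note_len, decay=0.3, sustain=0.3)
    modulator = index * math.sin(2 * math.pi * freq * ratio * t)
    return adsr(t, note_len) * math.sin(2 * math.pi * freq * t + modulator)


def render_note(freq, note_len=0.5, sample_rate=8000):
    """Render one note, including the default 0.2 s release tail."""
    total = int((note_len + 0.2) * sample_rate)
    return [fm_sample(n / sample_rate, freq, note_len) for n in range(total)]


if __name__ == "__main__":
    samples = render_note(220.0)
    print(len(samples), max(samples), min(samples))
```

A polyphonic version would simply sum several such voices, one per held note, and scale the result to avoid clipping.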

Electronics Side Project

I developed a RESTful API for my personal garage using Twilio, Node.js, and a Raspberry Pi. It controlled the garage via a web application, text message, or Android application for easy access to the house. Read the full documentation here.

Class Project

I got a chance to take a 3D printing class through the Entrepreneurship department at the University of Michigan: ENTR 390.006, Intro to Entrepreneurial Design. Through the class, I learned about 3D modeling in Fusion 360, converting those models into STL files and then ultimately into G-code to print them on MakerBots using PLA material. I also learned about photogrammetry, the process of algorithmically converting a series of rotated images into a 3D model. You can see a picture of a 3D model of my shoe made using this method to the left. Throughout the class, I experimented with printing static models such as a turtle as well as dynamic "moving parts" using gears, including a basic door hinge. You can print those models here.

Electronics Engineer

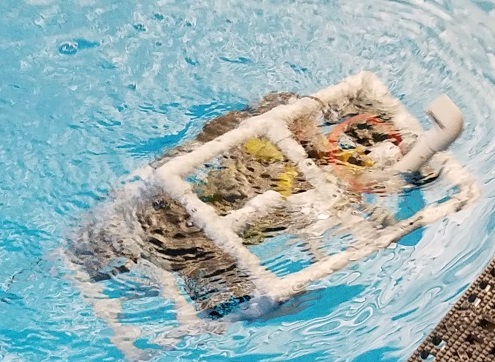

As a part of the Engineering 100 class at the University, a group of fellow engineers and I were tasked with creating an underwater ROV that had a remote control system, a Bluetooth camera module, and a hook to allow for interaction with its surroundings. Over one semester, we designed, built, and tested the ROV in preparation for a competition at the end of the semester, where we pitted our ROV against other groups in a design challenge.

My contribution to the team was to use Arduinos and RF transmitters/receivers to build a remote control and Bluetooth camera system that allowed complete control and vision of the underwater ROV, and to help build the final product using PVC. Through the class, I learned technical communication skills and how to write technical reports. A detailed technical report of this project can be found here.

Music

Maize Mirchi A Cappella

I am very proud to be a major part of the recording, tracking, and arranging process of a full-length album with Maize Mirchi A Cappella! The album is a culmination of our work from the past two years and features much of the music that I arranged for the group during my time as music director. It will feature eight of our best songs and will be coming out later this year! For more info, check out our Spotify page with the recent release of two separate singles, 'Zehnaseeb' and 'Piya o Re Piya'.

Maize Mirchi A Cappella

I was part of the recording and tracking process of Maize Mirchi's latest EP, Silent Call. The album is the studio-recorded version of our ICCA competition (did someone say Pitch Perfect?) set that we took all the way to the semifinals, as well as to the finals (and ultimately 2nd place) of All American Awaaz. Special thanks to Liquid 5th Productions for helping us record, mix, and master!

https://soundcloud.com/prakash-kumar-34

I've recently started putting some of my music and covers on SoundCloud. My classwork from PAT 201/202 hasn't been put up yet, but I plan to start posting all of my work, including upcoming studio projects, there too!

Coursework

- EECS 551: Matrix Methods for Signal Processing and Machine Learning

- EECS 452: Digital Signal Processing Design Lab

- EECS 598/498: Accelerated Systems for AI and health

- TC/ENGR 456: Upper level Technical Communications

- EECS 598: Optimization Methods in Signal and Image Processing and Machine Learning

- EECS 216: Signals and Systems

- EECS 301: Probability for Electrical Engineers

- EECS 498: Joint class for Multidisciplinary Design Program

- PAT 202: Computer Music

- Technical Communications 300 for Electrical and Computer Science

- Musicology 345: History of Music

- EECS 351: Digital Signal Processing

- EECS 373: Embedded Systems

- EECS 442: Computer Vision

- 2cr. Mentorship for MDP minor

- EECS 270: Introduction to Logic Design

- EECS 281: Data Structures and Algorithms

- EECS 398: Computing for Computer Scientists

- PHYS 240/241: Physics II (Electricity and Magnetism) and Lab

- EECS 215: Circuits

- EECS 370: Computer Architecture

- EECS 498: Joint class for Multidisciplinary Design Program

- PAT 201: Introduction to Computer Music

- EECS 203: Discrete Mathematics

- ENGR 151: Accelerated Introduction to Programming

- ENTR 407: Entrepreneurship Hour

- RCHUMS 353: Introduction to Fundamental Electronic Music History

- CHEM 125/126: General Chemistry Lab

- CHEM 130: General Chemistry Principles

- EECS 280: Programming and Data Structures

- ENGR 100: Introduction to Engineering Underwater Vehicle Design

- ENTR 390: Special Topics in Entrepreneurial Design: 3D Printing Section